What is autonomous testing?

Autonomous testing in DevOps

Software testing has always been a bottleneck. QA teams can't keep pace with modern release cycles, and manual testing only widens the gap. Autonomous testing changes that: AI handles test creation, execution, and maintenance automatically, so your team can focus on what matters.

Don't take our word for it. Read the latest research to see where autonomous testing is headed and what it means for your organization.

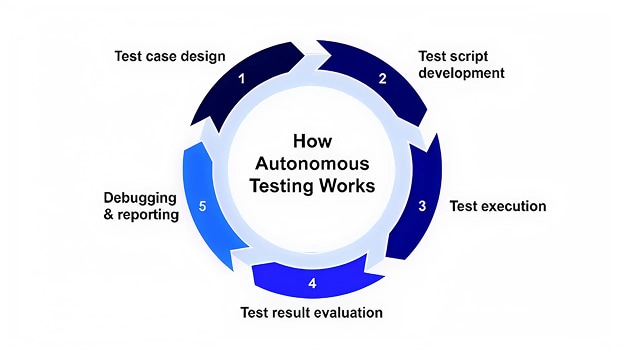

How autonomous testing works

Autonomous testing platforms combine several technologies to mimic how a human tester thinks and acts:

- Application mapping: The system crawls through your application, documenting every element, workflow, and interaction. It builds a complete picture of how things connect and what users can do.

- Intelligent test generation: Using that map, the platform creates test cases that cover critical paths, edge cases, and common user behaviors. It prioritizes based on risk and usage patterns rather than testing everything equally.

- Self-healing test automation: When UI elements change (a button moves, a field gets renamed), the system recognizes the change and updates the test automatically. No more brittle scripts that break with every minor update.

- Continuous learning: The platform watches test results over time. It learns which tests catch real bugs, which ones waste time on false positives, and where new coverage gaps appear, then adjusts the suite accordingly.

All of this runs in the background while your team focuses on other work. The system flags issues when they appear and provides context about what went wrong and why it matters.
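To make the self-healing idea concrete, here is a minimal sketch. It is an illustration only, not how any particular platform implements it. It assumes a page can be modeled as a list of attribute dictionaries and that the test recorded a small "fingerprint" of each element when it last passed. The names `Locator`, `similarity`, and `find_with_healing`, and the 0.6 threshold, are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Locator:
    """Hypothetical locator: a primary ID plus attributes recorded on the last passing run."""
    element_id: str
    fingerprint: dict = field(default_factory=dict)  # e.g. {"tag": "button", "text": "Login"}


def similarity(element: dict, fingerprint: dict) -> float:
    """Fraction of fingerprint attributes that the candidate element still matches."""
    if not fingerprint:
        return 0.0
    matches = sum(1 for key, value in fingerprint.items() if element.get(key) == value)
    return matches / len(fingerprint)


def find_with_healing(page: list[dict], locator: Locator, threshold: float = 0.6):
    """Return (element, healed), or (None, False) if nothing is similar enough."""
    # 1. Fast path: the ID the test was recorded with still exists.
    for element in page:
        if element.get("id") == locator.element_id:
            return element, False

    # 2. Fallback: pick the element closest to the recorded fingerprint.
    best = max(page, key=lambda el: similarity(el, locator.fingerprint), default=None)
    if best is None or similarity(best, locator.fingerprint) < threshold:
        return None, False

    # 3. "Heal" the locator so the next run takes the fast path again.
    locator.element_id = best.get("id", locator.element_id)
    return best, True


# Usage: the button's ID changed from "btn-login" to "btn-sign-in" in a new release.
page_v2 = [
    {"id": "nav-home", "tag": "a", "text": "Home", "role": "link"},
    {"id": "btn-sign-in", "tag": "button", "text": "Login", "role": "button"},
]
login = Locator("btn-login", {"tag": "button", "text": "Login", "role": "button"})
element, healed = find_with_healing(page_v2, login)
print(element["id"], healed)
```

The threshold matters here: set it too low and the test may silently "heal" onto the wrong element, which is one reason to review healed locators rather than accept them blindly.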

What are the benefits of autonomous testing?

The value proposition sounds good on paper, but what does it mean for your team day-to-day?

Most QA teams are stretched thin, juggling regression testing, new feature validation, bug reproduction, and test maintenance all at once. Something always gets deprioritized. Autonomous testing doesn't just make existing work faster; it fundamentally changes what's possible with the same headcount and budget.

Teams that adopt autonomous testing report a shift in how they think about quality. Instead of testing being a gate that slows everything down, it becomes an enabler that gives developers confidence to move quickly. The QA team stops spending 80% of their time on repetitive execution and maintenance, and starts focusing on exploratory testing, user experience validation, and strategic quality planning.

Here's what that shift looks like in practice:

- Faster release cycles: Tests run continuously without waiting for someone to manually trigger them. Feedback loops shrink from days to minutes, so teams can push updates confidently and frequently.

- Better coverage with less effort: The system explores paths that humans might overlook or deprioritize. It tests combinations that would take weeks to script manually, all while requiring minimal setup time.

- Reduced maintenance burden: Test maintenance typically consumes 30-50% of a QA team's time. Autonomous testing cuts that dramatically because tests fix themselves when the application changes.

- Consistent quality standards: Human testers have good days and bad days. They miss things when rushed or tired. Autonomous systems maintain the same thoroughness regardless of deadline pressure or team bandwidth.

- Lower skill barriers: You don't need specialized automation engineers to build and maintain test suites. Team members with basic testing knowledge can set up and manage autonomous testing platforms.

What about challenges? How do you resolve them?

Autonomous testing isn't a magic solution that works perfectly out of the box. Teams run into real obstacles during adoption, and pretending otherwise does nobody any favors. The good news is that most challenges have straightforward solutions once you know what to expect.

Here are the most common issues and how successful teams solve them:

- Initial setup complexity: Getting started requires upfront effort to train the system on your application. Many teams underestimate this learning period.

- Resolution: Start with a small, stable part of your application. Let the system learn that thoroughly before expanding into more complex areas. Most platforms show value within 2-3 weeks of focused setup.

- Trust in automated decisions: Teams worry about letting AI decide what to test and when. What if it misses something critical?

- Resolution: Use autonomous testing alongside human judgment, not instead of it. Review generated test plans initially, then gradually increase autonomy as you build confidence.

- Integration with existing tools: Your team already uses specific CI/CD pipelines, bug trackers, and monitoring tools. Adding another system creates friction.

- Resolution: Choose platforms that offer strong API support and pre-built integrations, and that fit into your existing workflow.

- Handling dynamic content: Applications with constantly changing data or personalized content can confuse test systems that expect predictable behavior.

- Resolution: Modern platforms handle this through pattern recognition rather than exact matching. They understand "this is a product name" rather than "this must say 'Blue Widget 2000.'" Look for systems with strong dynamic content handling in their documentation.

- Cost justification: Autonomous testing platforms represent a real investment, and ROI isn't always immediately obvious.

- Resolution: Calculate your current test maintenance hours, multiply by hourly cost, project it over 12 months, and include the cost of bugs missed by manual testing. Most teams see payback in 6–9 months.
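The cost calculation above can be sketched in a few lines. This is a rough model under stated assumptions, not a formula from any vendor. The function names, the `savings_fraction` parameter, the idea that the platform cost is paid up front for the first year, and every figure in the example are hypothetical placeholders to replace with your own numbers.

```python
def annual_baseline_cost(maintenance_hours_per_month: float,
                         hourly_cost: float,
                         missed_bug_cost_per_year: float) -> float:
    """Maintenance hours x hourly cost, projected over 12 months, plus missed-bug cost."""
    return maintenance_hours_per_month * hourly_cost * 12 + missed_bug_cost_per_year


def payback_months(baseline_cost_per_year: float,
                   platform_cost_per_year: float,
                   savings_fraction: float) -> float:
    """Months until cumulative savings cover an up-front first-year platform cost.

    savings_fraction is an assumption: the share of the baseline cost you expect
    the platform to eliminate (0.5 means half).
    """
    monthly_savings = baseline_cost_per_year * savings_fraction / 12
    if monthly_savings <= 0:
        raise ValueError("savings must be positive to compute a payback period")
    return platform_cost_per_year / monthly_savings


# Hypothetical inputs: 160 maintenance hours a month at $60/hour,
# plus $24,800 a year attributed to bugs that manual testing missed.
baseline = annual_baseline_cost(160, 60, 24_800)
months = payback_months(baseline, platform_cost_per_year=42_000, savings_fraction=0.5)
print(f"Baseline: ${baseline:,.0f}/year, payback in about {months:.1f} months")
```

The result is only as good as the savings estimate, so it is worth running the numbers with a pessimistic `savings_fraction` as well as an optimistic one.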

How do AI and test automation come into play?

People often confuse automation with autonomous testing, but there's a meaningful difference. Traditional test automation follows rigid scripts: "Click here, type this, check that." It's powerful but fragile. Change one element and the whole script breaks.

Autonomous testing uses AI to make those scripts smarter and more resilient. Here's where it matters most:

- Computer vision: Instead of relying solely on HTML IDs or XPath selectors, AI-powered systems use visual recognition to find elements. They see the "Login" button the way a human would, even if the underlying code changes.

- Natural language processing: Some platforms let you describe tests in plain English, e.g., "verify that users can complete checkout with a saved payment method." The system translates that into executable tests without requiring code.

- Pattern recognition: AI identifies common workflows and anti-patterns across your application. It spots when multiple pages follow similar logic and generates tests accordingly, avoiding redundant work.

- Predictive analytics: By analyzing historical data, the system predicts which areas are most likely to break and focuses on testing them. It's smarter about resource allocation than a human could be while juggling dozens of priorities.

- Anomaly detection: When something looks off (performance suddenly degrades, error rates spike, user flows change unexpectedly), the AI flags it even if no specific test failed. This catches issues that traditional testing misses entirely.

The test automation handles execution and the AI handles intelligence. Together, they create testing that scales without linear increases in cost or effort.
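As a rough illustration of the predictive analytics point, the sketch below ranks application areas by a simple risk score built from historical failure rate, recent code churn, and usage. It is a toy heuristic, not a description of any product's model. The `AreaHistory` fields, the fixed weights, and the sample data are all assumptions; a real platform would presumably learn its weighting from data rather than hard-code it.

```python
from dataclasses import dataclass


@dataclass
class AreaHistory:
    name: str
    runs: int            # test runs recorded for this area
    failures: int        # how many of those runs failed
    recent_commits: int  # commits touching the area in the current window
    usage_share: float   # fraction of user sessions that touch the area (0..1)


def risk_score(area: AreaHistory, max_commits: int) -> float:
    """Weighted blend of failure rate, relative churn, and usage (weights are assumed)."""
    failure_rate = area.failures / area.runs if area.runs else 0.0
    churn = area.recent_commits / max_commits if max_commits else 0.0
    return 0.5 * failure_rate + 0.3 * churn + 0.2 * area.usage_share


def prioritize(areas: list[AreaHistory]) -> list[str]:
    """Return area names ordered from highest to lowest risk."""
    max_commits = max((a.recent_commits for a in areas), default=0)
    ranked = sorted(areas, key=lambda a: risk_score(a, max_commits), reverse=True)
    return [a.name for a in ranked]


# Hypothetical history for three areas of an application.
history = [
    AreaHistory("settings", runs=100, failures=1, recent_commits=0, usage_share=0.05),
    AreaHistory("search", runs=200, failures=4, recent_commits=3, usage_share=0.70),
    AreaHistory("checkout", runs=200, failures=30, recent_commits=12, usage_share=0.40),
]
print(prioritize(history))
```

With this sample data, checkout ranks first because it combines the highest failure rate with the most recent churn, even though search sees more traffic.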

What is projected for autonomous testing in the future?

The technology has matured quickly, but we're still early. Here's where things are headed:

- Predictive test generation: Systems will analyze code commits and automatically generate tests for new features before developers finish writing them. Testing starts keeping pace with development rather than lagging behind.

- Self-optimizing test suites: Platforms will continuously prune redundant tests, merge overlapping coverage, and rebalance execution based on real-world usage data. Test suites will stay lean and relevant without manual curation.

- Cross-platform intelligence: Your web tests will inform mobile tests and vice versa. The system will recognize when a bug in one platform is likely to exist in others and proactively check them.

- Production testing integration: Autonomous testing will blur the line between pre-production and production environments. Systems will safely test in production using real user patterns, synthetic users, and intelligent traffic sampling.

- Collaborative AI testing tools: Rather than working in isolation, autonomous testing platforms will communicate with each other, sharing learnings about common vulnerabilities, effective test patterns, and emerging risk areas.

By 2027, most development teams will treat autonomous testing as infrastructure, the same way they treat CI/CD pipelines today. It won't be a special tool that requires expertise. It'll just be how testing works.
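The self-optimizing suite idea from the list above can be approximated today with a well-known heuristic. The sketch below uses greedy set cover to keep a small set of tests that still covers every path and to flag the rest as pruning candidates. It is speculative as a picture of future platforms. The `prune_redundant` function, the coverage map format, and the test names are hypothetical, and greedy set cover is not guaranteed to find the smallest possible suite.

```python
def prune_redundant(coverage: dict[str, set[str]]) -> tuple[list[str], list[str]]:
    """Greedy set cover: repeatedly keep the test that covers the most uncovered paths.

    Returns (kept, prune_candidates). Tests left over add no coverage that the
    kept tests do not already provide.
    """
    uncovered = set().union(*coverage.values())
    remaining = dict(coverage)
    kept = []
    while uncovered:
        # Sort names first so ties are broken deterministically.
        best = max(sorted(remaining), key=lambda name: len(remaining[name] & uncovered))
        kept.append(best)
        uncovered -= remaining.pop(best)
    return kept, sorted(remaining)


# Hypothetical map of test name -> paths it exercises.
suite = {
    "test_login": {"login", "session"},
    "test_login_and_checkout": {"login", "session", "cart", "payment"},
    "test_cart": {"cart"},
    "test_profile": {"profile"},
}
kept, prune_candidates = prune_redundant(suite)
print("keep:", kept)
print("review for pruning:", prune_candidates)
```

Coverage overlap is only one signal. A test flagged here may still be worth keeping if it is faster, more stable, or checks assertions the broader test does not, which is why the output is a list of candidates to review rather than an automatic deletion.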

Can OpenText help us?

Your testing strategy needs to keep up with your digital journey. Your applications are getting more complex, release cycles are speeding up, and you need intelligent test automation that can adapt automatically.

Autonomous software testing helps you scale quality management without constantly hiring more people. Instead of manually fixing test scripts every time something changes in your apps, OpenText’s AI-powered testing tools learn and adjust themselves. Self-healing test automation means your QA team can focus on the work that matters instead of endless maintenance.

Modernize your testing with AI testing tools that align with your quality strategy. Explore how DevOps testing automation and continuous testing in CI/CD pipelines can clear your testing bottleneck and speed up releases.

Ready to see what autonomous testing can do for your team? Learn more about intelligent testing solutions that adapt to your applications and workflows.

Resources

- Pick n Pay: Major retailer accelerates software testing and increases test automation with OpenText™ Core Software Delivery Platform and OpenText™ DevOps Aviator™

- Independent Health (leveraging AI): Reduces mobile test maintenance by 35% while rapidly managing application changes with OpenText™ Functional Testing AI-based capabilities

- Major insurer: Accelerates vital tests on straight-through processing with the latest AI capabilities embedded in OpenText™ Functional Testing, saving time and effort, and reducing training requirements

- Credit Agricole Payment Services: Financial services company modernized software testing and introduced AI with OpenText

- Roche Diagnostics: OpenText Functional Testing AI capabilities improve regression testing times by 90% and enhance test coverage while aligning with corporate DevOps delivery